Amazon Rufus AI: Find Hidden Gaps in Your FBA Listings

Amazon Rufus AI is a conversational shopping assistant built into the Amazon mobile app and desktop search experience that answers natural-language buyer questions by pulling from product listings, customer reviews, Q&A sections, and web data simultaneously. Most FBA sellers have heard of it. Almost none have used it as a research tool for their own catalog.

That gap is the opportunity. Rufus does more than help shoppers decide what to buy. It reflects, in plain language, exactly what your listing fails to explain. Every non-answer, every vague response, every time Rufus reaches outside your product detail page to fill in context, is a signal that your content left a question unanswered. This guide shows you how to read those signals and act on them.

Key Takeaways

- Amazon's AI shopping assistant Rufus, which expanded to all U.S. customers in 2024, answers buyer questions directly within the search experience, meaning it can influence purchase decisions before a shopper ever reads a product listing.
- Rufus pulls its answers from listing content including titles, bullet points, descriptions, and Q&A sections, so gaps in that content directly reduce a seller's visibility in AI-generated responses.
- Most FBA sellers have not adjusted their listing copy to account for how Rufus surfaces information, creating a competitive gap that early adopters can exploit.
- Rufus is designed to handle comparison and use-case questions, which means listings that only describe a product rather than contextualizing it for specific scenarios are less likely to be cited by the assistant.
- The questions Rufus fails to answer about a product reveal exactly which buyer objections a listing is not addressing, making it a diagnostic tool for conversion optimization.
- Sellers who structure their content around natural-language buyer questions are better positioned to appear in Rufus responses than those optimizing solely for traditional keyword-based search ranking.

The Problem

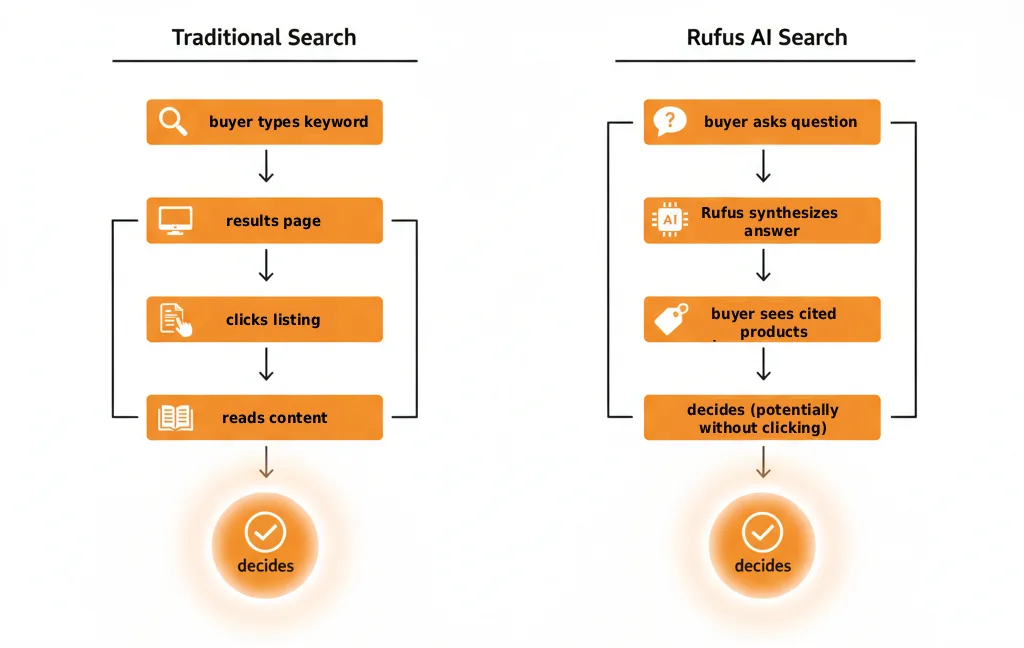

Most FBA sellers are optimizing for a version of Amazon search that is already becoming obsolete. The traditional mental model, where you stuff your title with keywords, load your bullets with features, and rank for high-volume search terms, was built around a system where buyers typed a query, scanned a results page, and clicked through to listings they evaluated themselves. Rufus changes that chain of events in a way most sellers have not fully reckoned with.

Rufus, which Amazon began rolling out to all U.S. shoppers in 2024, operates as a conversational layer sitting on top of the traditional search experience. A buyer does not have to click into your listing to start forming an opinion about your product. They can ask Rufus something like "what's the best protein powder for people who can't tolerate dairy" or "will this camping stove work above 10,000 feet" and receive a synthesized answer pulling from multiple listings simultaneously. If your listing does not contain content that directly addresses those questions, Rufus does not cite your product. The buyer may never see you at all.

The content gap sellers are not measuring

The core problem is that most listing audits focus on what content sellers have written, not on what questions that content fails to answer. A seller can have a fully keyword-optimized listing with a strong title, five complete bullet points, an A+ content module, and hundreds of positive reviews, and still be effectively invisible to Rufus on the questions that matter most to buyers at the decision stage. Feature-forward copy and question-answering copy are not the same thing, and the gap between them is where conversions quietly die.

Consider a concrete example. A seller listing a stainless steel travel mug might have bullets describing the double-wall vacuum insulation, the lid type, and the color options. That content serves keyword ranking reasonably well. But if a buyer asks Rufus whether the mug is safe for use with carbonated drinks, or whether the lid is truly leak-proof when packed sideways in a bag, or how long it actually keeps coffee hot in cold weather, and the listing never addresses those specifics, Rufus has nothing to pull from. It will either give a vague non-answer or, more likely, surface a competitor whose listing does answer those questions directly.

Why sellers have been slow to respond

Part of the problem is visibility. Unlike a drop in organic rank, there is no dashboard metric that tells a seller their product is being skipped by Rufus. The absence shows up indirectly, in flat conversion rates, in Q&A sections filling up with questions the listing should have already answered, in reviews that mention "I wasn't sure about X before buying." These are all signals that buyer questions are going unanswered somewhere in the purchase journey, but most sellers attribute them to pricing or photography rather than content architecture.

There is also a category-wide complacency problem. Because Rufus is relatively new, many sellers assume it is a minor feature used by a small segment of shoppers. That assumption is increasingly difficult to defend. Amazon has been actively promoting Rufus across the app and mobile experience, and conversational AI adoption in e-commerce tends to follow a curve in which early usage significantly understates the long-term behavioral shift. Sellers who wait until Rufus usage is undeniable before adjusting their listings will have already ceded ground to competitors who treated it as a structural change worth preparing for now.

A Four-Step Framework for Rufus-Ready Listings

The most effective way to make your listings Rufus-ready is to stop writing for a search algorithm and start writing for a conversation. That shift requires a repeatable process, not a one-time listing refresh, because buyer questions evolve alongside product categories, seasonal use cases, and emerging competitor claims.

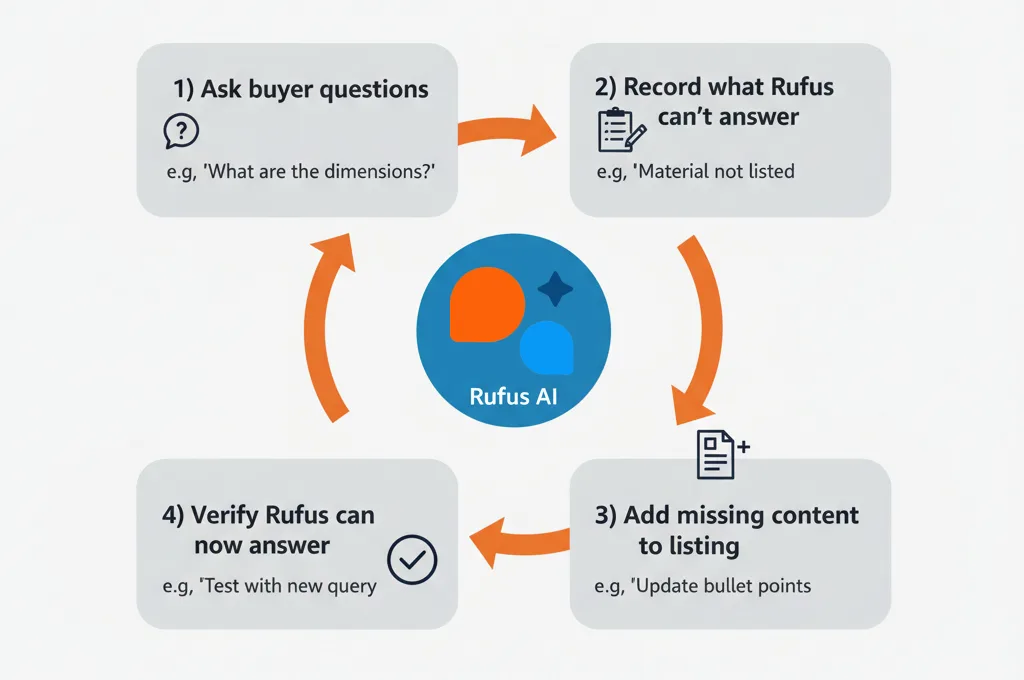

Step One: Extract the real questions buyers are asking

Before you rewrite a single sentence, you need a question inventory. This is a structured collection of every question buyers are actually asking about products in your category, pulled from sources that reflect unfiltered intent rather than assumed search behavior.

The sources to mine, in rough priority order:

- Your own listing's Q&A section, which surfaces questions your existing content failed to answer preemptively
- Competitor Q&A sections, especially for top-three and top-ten ranked products in your category
- One-star and two-star reviews, which frequently describe unmet expectations rooted in unanswered pre-purchase questions
- Three-star reviews, which often contain the most actionable nuance because buyers were partially satisfied but felt something was missing
- Reddit threads, Facebook groups, and niche forums where buyers discuss your product type candidly
- The "Customers ask" feature in the Amazon browsing experience

Once you have collected at least thirty to fifty questions across these sources, group them by decision stage. Some questions are awareness-level (what is this product, who is it for). Some are consideration-level (how does it compare to alternatives, what are the limitations). The most important for Rufus optimization are decision-stage questions, the specific, situational questions buyers ask right before they add to cart or walk away.
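The grouping step above can be sketched as a small data structure. This is a minimal illustration, not a prescribed tool: the `Question` class, the source labels, and the sample questions are all hypothetical, and the stage for each question is assumed to be assigned by hand while you review it.

```python
from dataclasses import dataclass

# Stage labels mirror the three decision stages described above.
STAGES = ("awareness", "consideration", "decision")


@dataclass(frozen=True)
class Question:
    text: str
    source: str  # hypothetical labels, e.g. "own_qa", "competitor_qa"
    stage: str   # one of STAGES, assigned manually during review


def group_by_stage(questions):
    """Return a dict mapping each stage to the questions tagged with it."""
    grouped = {stage: [] for stage in STAGES}
    for q in questions:
        if q.stage not in grouped:
            raise ValueError(f"unknown stage: {q.stage!r}")
        grouped[q.stage].append(q)
    return grouped


# Illustrative entries only; a real inventory would hold thirty to fifty.
inventory = [
    Question("Who is this mug for?", "own_qa", "awareness"),
    Question("How does it compare to a plastic tumbler?", "competitor_qa", "consideration"),
    Question("Is the lid leak-proof when packed sideways in a bag?", "review_3_star", "decision"),
    Question("Is it safe for carbonated drinks?", "own_qa", "decision"),
]

grouped = group_by_stage(inventory)
for stage in STAGES:
    print(stage, len(grouped[stage]))
```

Keeping the source alongside each question makes it easy to see later which questions show up in more than one place.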

Step Two: Score each question against your current listing

Take your question inventory and run it against your existing listing content systematically. For each question, mark whether your title, bullets, description, A+ content, or Q&A section answers it directly, answers it partially, or does not address it at all.

This scoring exercise almost always produces an uncomfortable result. Most listings answer feature questions adequately and decision-stage questions poorly. A listing might clearly state a product's dimensions, material, and included accessories while saying nothing about the situations where it underperforms, the compatibility edge cases buyers worry about, or the practical use details that only matter to someone who is seriously considering a purchase.

The gap score, meaning the ratio of unanswered decision-stage questions to total decision-stage questions, is your Rufus vulnerability number. A listing with ten unanswered decision-stage questions and no A+ content module has a structurally weak position for conversational AI retrieval regardless of how well it ranks in keyword search.
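As a worked example, the gap score can be computed in a few lines. This is a sketch under one stated assumption: only questions the listing does not address at all count as unanswered, while partially answered ones count as answered. You may prefer a stricter rule. The function name and sample questions are illustrative.

```python
# Coverage labels follow the three outcomes named in Step Two.
DIRECT, PARTIAL, NONE = "direct", "partial", "none"


def gap_score(coverage_by_question):
    """Share of decision-stage questions the listing does not address at all.

    Assumption: only NONE counts as unanswered; PARTIAL is treated as answered.
    """
    if not coverage_by_question:
        raise ValueError("need at least one decision-stage question")
    unanswered = sum(1 for c in coverage_by_question.values() if c == NONE)
    return unanswered / len(coverage_by_question)


# Illustrative audit of four decision-stage questions for a travel mug.
decision_stage_audit = {
    "Is it safe for carbonated drinks?": NONE,
    "Is the lid leak-proof when packed sideways in a bag?": PARTIAL,
    "How long does it keep coffee hot in cold weather?": NONE,
    "Does it fit a standard cup holder?": DIRECT,
}

score = gap_score(decision_stage_audit)
print(f"gap score: {score:.2f}")  # 2 of 4 unanswered -> 0.50
```

A score near 1.0 means almost none of the decision-stage questions are addressed; a score near 0.0 means the listing covers most of them.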

Step Three: Rewrite content around answer structures Rufus can retrieve

Rufus pulls synthesized answers from listing content, and the content it retrieves most reliably tends to be structured in a way that mirrors the question being asked. This does not mean literally writing "Q: Is this mug safe for carbonated drinks? A: Yes." It means building sentences and paragraphs that contain both the context of the question and the direct answer within a short, parseable block of text.

A bullet point that reads "double-wall vacuum insulation keeps beverages hot or cold" is feature copy. A bullet point that reads "keeps coffee hot for up to six hours in temperatures below freezing, based on testing at 20 degrees Fahrenheit" is answer copy. The second version responds to the actual question a buyer would ask Rufus before purchasing a travel mug for winter outdoor use.

Apply this rewrite logic to your five bullets first, then to your A+ content body paragraphs, and finally to your product description. Prioritize the questions that scored as completely unanswered in your gap audit, and specifically weight the questions that appeared in both your own Q&A section and competitor Q&A sections simultaneously. Those overlapping questions represent category-level buyer anxiety that Rufus is almost certainly being asked to resolve.
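The prioritization rule above can be expressed as a simple sort. The sketch below assumes each audit row has already been matched by hand across sources, so the `in_own_qa` and `in_competitor_qa` flags, the field names, and the sample rows are all hypothetical.

```python
def rewrite_queue(audit_rows):
    """Return unanswered questions, overlapping ones first.

    A question "overlaps" when it appears in both your own Q&A section
    and competitor Q&A sections. Ties are broken alphabetically.
    """
    unanswered = [row for row in audit_rows if row["coverage"] == "none"]
    return sorted(
        unanswered,
        key=lambda row: (
            not (row["in_own_qa"] and row["in_competitor_qa"]),  # False sorts first
            row["question"],
        ),
    )


# Illustrative rows from a travel mug gap audit.
audit_rows = [
    {"question": "Does it fit a car cup holder?", "coverage": "direct",
     "in_own_qa": True, "in_competitor_qa": True},
    {"question": "Is it safe for carbonated drinks?", "coverage": "none",
     "in_own_qa": True, "in_competitor_qa": False},
    {"question": "Is the lid leak-proof when packed sideways?", "coverage": "none",
     "in_own_qa": True, "in_competitor_qa": True},
    {"question": "How long does coffee stay hot in cold weather?", "coverage": "none",
     "in_own_qa": False, "in_competitor_qa": True},
]

queue = rewrite_queue(audit_rows)
for row in queue:
    print(row["question"])
```

Here the leak-proof question comes out on top because it is both unanswered and asked on your listing and on competitor listings.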

Step Four: Use Rufus itself as a feedback loop

Once your updated listing is live, query Rufus directly using the decision-stage questions from your inventory. Ask it the same questions a buyer in your category would ask. Note whether your product appears in the response, whether your listing is cited or paraphrased, and whether the answer Rufus gives accurately reflects your product's actual strengths. If Rufus is generating an answer that does not mention your product despite your listing containing relevant content, the phrasing may still be too feature-forward or buried too deep in the copy hierarchy for reliable retrieval. Iterate the language and test again over successive listing update cycles.
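One way to keep this loop honest is to log each test query and flag the ones that need another pass. The sketch below does not call Rufus in any way; it assumes you type each question into Rufus yourself and record what you observe. The `RufusCheck` class and its fields are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class RufusCheck:
    question: str
    product_mentioned: bool  # did your product appear in the response?
    answer_accurate: bool    # did the answer reflect your product's real strengths?


def needs_iteration(checks):
    """Return the questions whose Rufus response still falls short."""
    return [
        c.question
        for c in checks
        if not (c.product_mentioned and c.answer_accurate)
    ]


# Observations entered by hand after one round of manual testing.
checks = [
    RufusCheck("What travel mug works best for cold weather hiking?", True, True),
    RufusCheck("Is this mug safe for carbonated drinks?", False, False),
    RufusCheck("Is the lid leak-proof when packed sideways?", True, False),
]

todo = needs_iteration(checks)
print(todo)
```

Re-running the same questions after each listing update shows whether the list of flagged questions is shrinking.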

How FBA Sellers Are Winning and Losing with Rufus Right Now

Rufus is already reshaping purchase decisions in measurable ways, and the sellers who understand this concretely, through actual category behavior rather than abstract theory, are the ones adjusting their listings with urgency. The patterns below illustrate how the gap between feature copy and answer copy plays out across common FBA categories.

The travel mug seller who answered the wrong questions

A representative pattern in the drinkware category: a seller with a 4.4-star travel mug, several hundred reviews, and a well-optimized keyword title is losing Rufus-driven discovery to a newer listing with fewer reviews but denser situational content. When a buyer asks Rufus "what travel mug works best for cold weather hiking," the established seller's listing returns nothing usable because its bullets read like a spec sheet: 20-ounce capacity, 18/8 stainless steel, fits most cup holders, leak-proof lid. Those specs answer nobody's question. The competing listing states that the mug maintains liquid temperature in sub-freezing conditions, that the lid can be operated with gloved hands, and that condensation does not form on the exterior in humid conditions. Rufus synthesizes that second listing's content into a direct, confident answer. The first listing is invisible.

The difference is not star rating, not review count, and not keyword density. It is that one listing was written to answer the question buyers type into Rufus before a weekend trip and the other was written to rank for search terms buyers type into the keyword bar. Those are different content jobs, and in 2026 both jobs are real and neither covers for the absence of the other.

The pet supplement brand that treats its Q&A like a graveyard

A pattern that appears consistently across health and pet categories is the abandoned Q&A section. Sellers in these categories often accumulate thirty to sixty buyer questions over a product's lifetime and answer them sporadically, with short responses like "yes it does" or "please contact us for details." These non-answers are not inert. They actively signal to Rufus that the listing cannot resolve buyer concerns. When a pet owner asks Rufus whether a joint supplement is safe to combine with a specific prescription medication, Rufus draws on whatever answer content exists. A vague or absent answer means Rufus may pull a competitor's more detailed safety language instead, even if that competitor's product has an objectively weaker formulation.

The sellers winning in this space are treating every Q&A response as a mini content block. They are writing two to four sentence answers that include context, specificity, and the kind of reassurance that converts anxious buyers. A response that reads "this supplement does not contain NSAIDs or corticosteroids, making it compatible with most veterinary-prescribed medications, though we always recommend confirming with your vet for dogs on active treatment plans" gives Rufus a complete, citable answer. That response can surface in a buyer conversation even months after it was posted.

The kitchen gadget that lost to its own return rate signal

One of the less obvious dynamics in Rufus optimization involves products with elevated return rates in specific use cases. Rufus's synthesis capability means it can draw on review sentiment patterns when listing content is thin. A garlic press seller with a high return rate among buyers who expected it to work with unpeeled cloves was seeing Rufus responses that flagged this limitation, because dozens of reviews mentioned the issue explicitly while the listing never addressed it. The seller who added a single clear bullet specifying which garlic preparation methods the product was optimized for, and which it was not, effectively pre-empted the negative signal. Rufus began citing the product's honest, specific scope rather than surfacing the review complaint pattern. Transparency, in this case, outperformed omission.

These patterns reinforce the same underlying truth: Rufus rewards completeness, not polish. The listings it surfaces most confidently are the ones that have already done the work of answering buyer questions in plain, structured, situationally grounded language.

If you sell between $1M and $2M on Amazon and your listings were built for keyword tools that predate conversational AI, there is a reasonable chance Rufus is already routing buyers toward competitors who answered their questions more completely. The fix is a structured audit that identifies exactly which questions your content leaves open and closes them in order of buyer impact, not a full listing rewrite.

Amazify works with mid-market FBA brands to run this audit as part of a broader listing and content strategy. If you want to see where your catalog stands, reach out to us for a free audit and to learn how we approach it.

Action Checklist

- Open the Rufus chat bar in the Amazon mobile app and search your own category before touching your listing. The questions Rufus surfaces organically are buyer intents your current content is almost certainly not addressing.
- Audit your Q&A section before your bullet points. Rufus draws on unanswered customer questions as a gap signal. A thin or absent Q&A section suppresses your listing in conversational queries even if your keywords are strong.
- Test at least three natural-language queries that match how a buyer describes their problem, not how you describe your product. If Rufus cannot answer those queries from your listing alone, rewrite the section that should have answered them.
- Add use-case and occasion framing to your title, bullets, and A+ content. Rufus handles activity-based and event-based queries; sellers who write "for first-time runners" or "ideal for science-themed birthday gifts" give it more surface area to cite.
- Treat every Rufus comparison answer as a competitor gap analysis. When Rufus places a competitor above you in a comparison response, note the specific claim it uses to justify that placement and add the equivalent claim to your own listing with supporting evidence in your reviews or specs.
- Re-run your Rufus audit every 90 days. Rufus is updated continuously, and the questions it surfaces will shift as buyer language and category trends evolve.

Frequently Asked Questions

Amazon Rufus AI is a conversational shopping assistant trained on Amazon's product catalog, customer reviews, Q&A data, and public web content. For sellers, it functions as an unintentional audit tool: the quality and completeness of your listing content directly shapes how Rufus describes, compares, or omits your product in its responses to shoppers.

Rufus operates as a separate conversational layer rather than the traditional keyword-based search algorithm, so it does not directly change your search ranking position. However, richer listing content that helps Rufus answer buyer questions accurately can improve engagement signals such as click-through and conversion, which do feed back into organic ranking over time.

Rufus draws from your product title, bullet points, product description, A+ content, customer reviews, and the Q&A section on your product detail page. It also incorporates web data, which means if your listing is thin, Rufus may pull context from competitor pages or category-level sources instead of yours.

Open the Amazon Shopping app on your phone and tap the Rufus chat icon to start a conversation as a shopper would. On desktop, Rufus appears as a chat panel integrated into the Amazon search page. Both surfaces are worth screenshotting before and after you run your audit queries so you can document what the responses look like against your current listing state.

Indirectly, yes. Rufus surfaces the language real buyers use when they are not sure of a product name, such as occasion-based queries, comparison questions, and problem-framing requests. These conversational phrases rarely appear in traditional keyword tools but represent genuine purchase intent. Mining Rufus for this language and then incorporating it naturally into your listing gives you coverage that keyword-only research misses.

The fundamentals overlap but the emphasis shifts. Standard search optimization prioritizes keyword placement and indexing. Optimizing for Rufus requires content that answers complete questions in plain language, because Rufus synthesizes answers rather than ranking pages. A listing that answers "is this waterproof?" in the bullets will surface better in a Rufus response than one that merely includes "waterproof" as a keyword without context.
